How to Actually Update Your Model

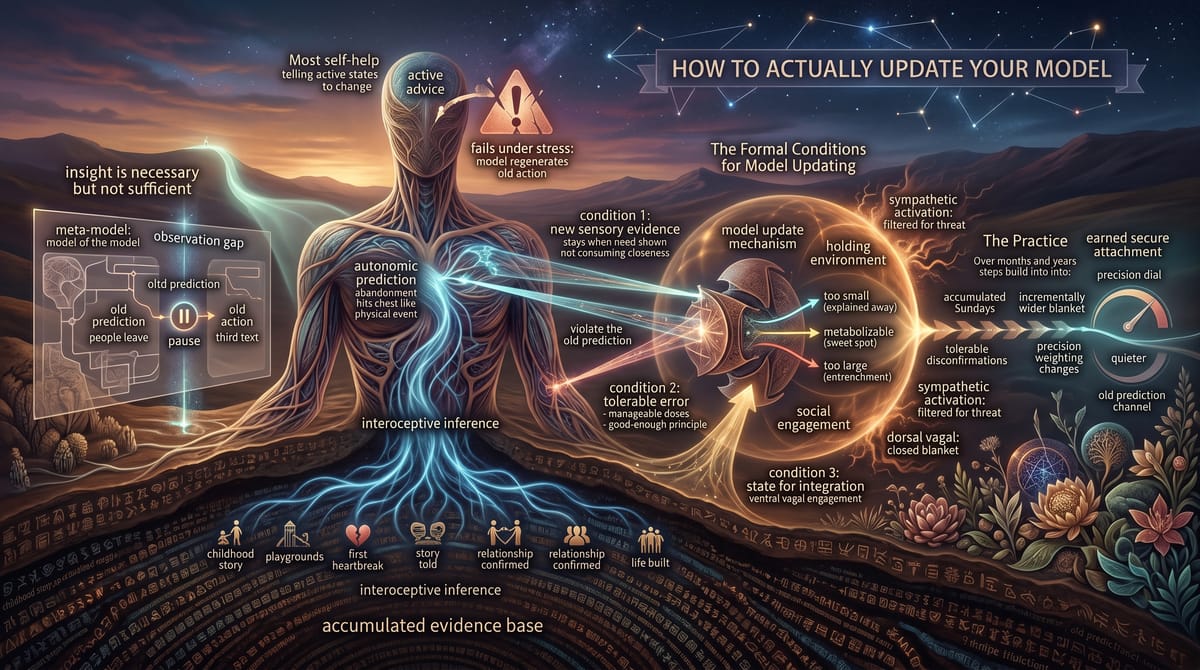

You understand the framework now. Your attachment style is a prediction strategy. Your precision weighting was set in the holding environment. Your attraction patterns are your prediction engine chasing the errors it was trained to chase. You can see the mechanism.

And seeing the mechanism changes nothing.

This is the most important sentence in the book, and it’s the one most self-help gets wrong. Insight is not intervention. Understanding your pattern is not the same as changing it. You can read every book on attachment theory, map your entire relational history through the lens of active inference, explain your avoidance or anxiety with perfect computational precision, and still react the exact same way the next time your partner doesn’t text back. Still feel the same flood. Still do the same thing.

This isn’t a failure of understanding. It’s the architecture of the prediction engine. The model that generates your attachment predictions doesn’t live in the part of your brain that reads books. It lives in the body. In interoceptive inference. In the autonomic predictions that fire before conscious awareness has a chance to weigh in. You can know you’re anxiously attached and still feel the abandonment prediction hit your chest like a physical event, because knowing and predicting are different systems, and the prediction engine doesn’t update through knowledge. It updates through experience.

So how does it actually update?

Why Insight Is Necessary but Not Sufficient

Let’s be precise about what insight does and doesn’t do.

Insight gives you something invaluable: a meta-model. A model of the model. When you understand that your anxiety is a precision-weighting problem rather than an accurate read on reality, you gain the ability to observe the prediction as it fires rather than being wholly captured by it. You can watch the error signal land and say, that’s my prediction engine running the old model, rather than treating the error as truth.

This matters. It creates a gap between prediction and action. In that gap lives the possibility of choosing a different response. Not a different feeling; the feeling is generated by the model, and the model doesn’t care what you know about it. But a different action. The anxious person can feel the abandonment prediction fire and choose not to send the third text. The avoidant person can feel the blanket thicken and choose to stay in the room anyway.

But the gap is not the update. The gap is the pause between the old prediction and the old action. The prediction itself is still firing. The model is still generating the same output. Insight gives you a better relationship with the model; it doesn’t change the model.

Dan Siegel calls this “mindsight”: the capacity to observe your own internal processes. It’s a prerequisite for model updating because you can’t change something you can’t see. But observation and change are different operations. You can observe a prediction engine running and still not change its parameters. The parameters change through a different mechanism entirely.

The Formal Conditions for Model Updating

Active inference is specific about how generative models update. Three conditions have to be met simultaneously, and most self-help advice meets one at best.

Condition 1: New sensory evidence that violates the old prediction.

The model updates when it receives data that contradicts its existing predictions. If your model says “people leave when I show need,” the updating data is a person who stays when you show need. If your model says “closeness leads to engulfment,” the updating data is closeness that doesn’t consume you. The new evidence has to specifically contradict the old prediction; general positivity doesn’t do it. The prediction engine isn’t updated by good experiences in general. It’s updated by good experiences that target the specific prediction that’s miscalibrated.

This is why “just being in a good relationship” doesn’t automatically fix attachment patterns. The good relationship provides lots of positive evidence, but if that evidence doesn’t specifically address the prediction that’s driving the attachment strategy, the prediction engine files it away without updating the relevant model. The anxious person can be in a loving, stable relationship and still run the abandonment prediction every time the partner goes quiet, because “going quiet” is the specific trigger and “being generally wonderful” doesn’t address it.

Condition 2: The violation has to be tolerable.

This is the condition most people miss. The prediction error generated by the new evidence has to fall within a range the system can metabolize. Too small and the system doesn’t register it as a genuine violation; it gets explained away, absorbed into the existing model without changing anything. Too large and the system doubles down.

This is counterintuitive. You’d think a massive disconfirmation of the old model would produce the biggest update. It doesn’t. It produces entrenchment. When the prediction error is too large, when the gap between the old model and the new evidence is too vast, the system treats the new evidence as an anomaly rather than as data. It tightens the old model rather than revising it. This is why “just date a nice person” doesn’t fix anxious attachment. The gap between the anxious model (“people are unreliable, I have to monitor constantly”) and the secure partner’s behavior (“I’m here, consistently, without drama”) is so large that the prediction engine can’t integrate it. Instead, it generates a new prediction: this can’t be real. This will end. Something is wrong that I can’t see yet. The old model survives by discounting the new evidence.

Tolerable prediction error is Winnicott’s good-enough principle applied to adult change. The good-enough mother doesn’t eliminate prediction error; she provides it in manageable doses. The same principle applies to model updating at any age. The new evidence has to be close enough to the existing model that the system can stretch to accommodate it, but different enough that the stretch actually occurs.

Condition 3: The system has to be in a state where it can integrate new data.

This is the holding environment condition. The most perfectly calibrated new evidence, arriving at the most perfectly tolerable dose, does nothing if the prediction engine is running in defense mode.

When the system is in sympathetic activation, it’s processing data through a threat model. New evidence gets filtered through the question: is this dangerous? The updating question (does this change my model?) isn’t running. The system is mobilized. It’s not in a state where it can sit with a prediction error and let it revise anything.

When the system is in a dorsal vagal state, it’s not processing relational data at all. The blanket has closed. The social engagement channels are offline. New evidence doesn’t even arrive at the prediction engine because the sensory channels that would carry it are shut down.

Model updating requires ventral vagal engagement. The system has to be in the state where it can receive social data, process prediction errors, and assign appropriate precision to the new evidence. This means the holding environment, the relational conditions that keep the system in ventral vagal, has to be present during the updating experience. It’s not enough for the new evidence to exist. It has to be received by a system that is regulated enough to do something with it.

This is why therapy takes time and why the therapeutic relationship matters more than the therapeutic technique. The therapist provides a holding environment: a regulated nervous system that keeps the client’s system in ventral vagal while the old predictions get gently violated. The technique is the specific prediction error being introduced. The relationship is the holding environment that makes the error tolerable. Without the holding environment, the technique bounces off a closed blanket.
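If it helps to see the three conditions side by side, here is a toy sketch in Python. It is an illustration of the argument, not a formal active inference model: the names (`Prediction`, `State`, `update`), the 0-to-1 strength scale, the tolerable window (`low`, `high`), and the learning `rate` are all assumptions made up for this example. It also simplifies "too large" into "no update" rather than modeling entrenchment.

```python
from dataclasses import dataclass
from enum import Enum


class State(Enum):
    VENTRAL = "ventral vagal"      # regulated, socially engaged
    SYMPATHETIC = "sympathetic"    # mobilized, running the threat model
    DORSAL = "dorsal vagal"        # shut down, social channels offline


@dataclass(frozen=True)
class Prediction:
    target: str      # what the prediction is about, e.g. "silence means abandonment"
    strength: float  # 0.0 (never expected) to 1.0 (always expected); hypothetical scale


def update(pred: Prediction, evidence_target: str, observed: float, state: State,
           low: float = 0.05, high: float = 0.5, rate: float = 0.1) -> Prediction:
    """Return a revised prediction only when all three conditions hold."""
    error = observed - pred.strength
    targeted = evidence_target == pred.target   # Condition 1: evidence addresses this prediction
    tolerable = low <= abs(error) <= high       # Condition 2: error is neither trivial nor overwhelming
    regulated = state is State.VENTRAL          # Condition 3: system can integrate new data
    if targeted and tolerable and regulated:
        return Prediction(pred.target, pred.strength + rate * error)
    return pred  # any missing condition leaves the old model in place


old = Prediction("silence means abandonment", 0.9)
print(update(old, "silence means abandonment", 0.5, State.VENTRAL))      # shifts slightly
print(update(old, "general warmth", 0.5, State.VENTRAL))                 # wrong target: unchanged
print(update(old, "silence means abandonment", 0.0, State.VENTRAL))      # error too large: unchanged
print(update(old, "silence means abandonment", 0.5, State.SYMPATHETIC))  # defense mode: unchanged
```

The point of the sketch is the `and`: remove any one condition and the function hands back the old prediction untouched.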

What This Looks Like in Practice

Earned secure attachment, the clinical term for moving from insecure to secure attachment in adulthood, is not a single event. It’s not a breakthrough session. It’s not the moment your partner proved they wouldn’t leave. It’s a process that looks, computationally, like incremental model updating over an extended period.

For the anxiously attached person, it looks something like this. You’re in a relationship with someone who is reliably present. Not perfectly present; reliably present. They go quiet sometimes. Your prediction engine fires the old model: they’re pulling away, something is wrong, I need to check. You feel the surge. The prediction error is there, loud and visceral. But instead of acting on it, instead of sending the text, making the bid, testing for reassurance, you sit with it. You stay in the gap that insight created.

And then the partner comes back. Not because you pulled them back. Because they were never gone. They were just quiet. The old prediction (“silence means abandonment”) gets a small, specific, tolerable disconfirmation. The new evidence (“silence sometimes means nothing”) arrives at the prediction engine while the system is regulated enough to process it. The model doesn’t rewrite itself in that moment. But it shifts, incrementally, one data point.

Do this a hundred times. A thousand times. Each time, the old prediction fires. Each time, the new evidence arrives. Each time, the model shifts a fraction. Over months and years, the precision weighting changes. The abandonment channel gets a little quieter. The system learns, through accumulated experience, that this particular person’s silence is not the same signal the old model was trained on. The prediction engine recalibrates.
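The arithmetic of "a fraction each time" can be made concrete with a small simulation. This is a sketch under invented numbers: the starting strength of 0.9, the observed value of 0.2 (this partner's silence rarely means leaving), and the per-repetition `rate` of 0.005 are assumptions chosen only to show the shape of the curve. The function name `rehearse` is hypothetical.

```python
def rehearse(strength: float, observed: float, rate: float, repetitions: int) -> float:
    """Nudge a prediction toward what is actually observed, a small step per repetition."""
    for _ in range(repetitions):
        error = observed - strength   # old prediction fires, new evidence arrives
        strength += rate * error      # the model shifts by a fraction of the error
    return strength


start = 0.9      # "silence means abandonment", held strongly (hypothetical scale 0 to 1)
observed = 0.2   # what this partner's silence turns out to mean, most of the time
for n in (1, 100, 1000):
    print(n, round(rehearse(start, observed, rate=0.005, repetitions=n), 3))
```

Run it and the first repetition barely registers, a hundred repetitions move the prediction noticeably, and a thousand bring it close to what the evidence has been saying all along. No single step looks like change; the accumulation does.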

For the avoidant person, the process runs differently. They’re in a relationship with someone who makes bids for connection. Each bid arrives at the blanket. The system’s first response is to deflect: low-precision processing, minimal engagement, the familiar thickening. But instead of deflecting completely, they let one signal through. Not because they want to. Because they’ve committed to the practice of letting one signal through.

The bid lands. The partner is asking about their day, and instead of the reflexive “fine,” the avoidant person says something real. A small thing. An honest thing. And the partner receives it. The partner doesn’t overwhelm them. Doesn’t push for more. Doesn’t turn the opening into a demand for total access. The old prediction (“letting signals in leads to engulfment”) gets a small, tolerable disconfirmation. Opening the blanket didn’t produce the catastrophe the model predicted.

Do this a hundred times. Each time, the blanket opens a fraction wider. Each time, the system learns that some relational signals can be processed without overwhelm. The precision on social data nudges upward. Not to the level of the anxious person’s screaming sensitivity. Just enough that bids for connection register. Just enough that the partner’s love can get through.

There’s a third scenario worth describing because it’s the one nobody writes about: the person whose model is mostly functional but carries one specific miscalibration that keeps detonating in otherwise good relationships. They’re not anxious across the board. They’re not avoidant across the board. But they have one trigger, one specific class of prediction error, that sends the system into a response disproportionate to the input. Maybe it’s the moment their partner mentions an ex. Maybe it’s the tone that sounds like dismissal. Maybe it’s the specific combination of physical distance and silence that maps, precisely, onto a scene from a relationship they thought they’d processed.

This is the most common presentation in functional adults, and it’s the hardest to recognize because the rest of the system runs fine. The model updating here is targeted. The person doesn’t need a general recalibration; they need a hundred tolerable disconfirmations of one specific prediction. The partner mentions an ex and doesn’t compare the two. The tone that sounded like dismissal turns out to be fatigue. The silence was just silence. Each time, the specific prediction fires and the specific evidence says no. Over time, that one channel recalibrates. The system learns to assign appropriate precision to that one class of signal rather than the inflated precision the old experience installed.

Why Most Advice Fails

Most self-help advice about attachment amounts to: act like a secure person. Communicate your needs. Don’t play games. Choose healthy partners. Don’t repeat your patterns.

In the active inference framework, this is telling someone to change their active states without updating the generative model that produces those active states. It’s like telling someone with a phobia to “just act confident around spiders.” The action is the output of a model. Change the action without changing the model and the model keeps regenerating the old action. It’s exhausting, it feels fake, and it collapses the moment stress arrives and the system drops out of conscious control and back into automatic prediction.

The accumulation problem makes this worse than most people realize. The model you’re trying to update wasn’t installed by one experience. It was built by hundreds of experiences across decades: the holding environment, the playground, the first heartbreak, the story you told about it, the next relationship, the confirmation, the next confirmation, the life you built around the configuration. You’re not overwriting one data point. You’re overwriting an entire evidence base. The model feels true because it has been true, in every environment the model selected for itself. “Just act secure” is asking you to ignore twenty years of accumulated evidence on the basis of a paragraph you read in a book. The prediction engine is not stupid. It knows what it knows. The update has to match the depth of the original training.
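One way to feel the weight of that evidence base is to count it. The sketch below treats the old prediction as a simple tally of confirmations and disconfirmations and uses the standard mean of a Beta posterior with a uniform prior. The counts (200 confirmations, 20 disconfirmations) are invented for illustration, the function names are hypothetical, and the tally assumes every experience weighs the same, which the rest of this chapter argues it does not.

```python
def expected_abandonment(confirmations: int, disconfirmations: int) -> float:
    """Posterior mean of a Beta(confirmations + 1, disconfirmations + 1) belief."""
    return (confirmations + 1) / (confirmations + disconfirmations + 2)


def disconfirmations_needed(confirmations: int, disconfirmations: int,
                            threshold: float) -> int:
    """Count the extra disconfirmations required to bring the belief down to the threshold."""
    extra = 0
    while expected_abandonment(confirmations, disconfirmations + extra) > threshold:
        extra += 1
    return extra


# Hypothetical history: 200 experiences that confirmed the prediction, 20 that did not.
print(round(expected_abandonment(200, 20), 3))       # where the belief starts
print(round(expected_abandonment(200, 21), 3))       # after one more good experience
print(disconfirmations_needed(200, 20, threshold=0.5))
```

With these made-up numbers, one good experience moves the belief by a sliver, and it takes on the order of the original history to bring it down to even odds. That is the sense in which the update has to match the depth of the training.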

The advice that works targets the model, not the action. It says: put yourself in environments where the old prediction gets gently, repeatedly, tolerably violated, while a holding environment keeps your system regulated enough to integrate the new data. That’s therapy. That’s a specific kind of relationship. That’s a daily practice of sitting with prediction errors and letting the model update instead of acting on the old one.

It’s slow. It’s not dramatic. It doesn’t feel like a revelation. It feels like practicing scales on a piano: the same exercise, repeated, building a capacity that only becomes visible over time. But the capacity is real. The precision weights shift. The model updates. And one day you notice that the old prediction fired and it was quieter this time. Not silent. Quieter. And you stayed in the gap a little longer. And the new evidence had a little more room to land.

That’s earned security. Not a personality transplant. A recalibration of the prediction engine, one tolerable error at a time, in the presence of a holding environment that makes the errors survivable.