Afterword: On Being a System That Knows It's a System

There’s a moment, if the framework lands for you, where something shifts.

You’re in the middle of a conversation with your partner. Or you’re lying in bed at 2am running scenarios. Or you’re at a family dinner watching yourself react. And instead of being inside the reaction, fully captured by the prediction and the error and the feeling, you’re slightly above it. Watching it happen. Noticing the machinery.

That’s my prediction engine generating a threat model.

That error signal is from the old environment, not this one.

My precision weighting on this channel is too high right now; the signal is loud but the evidence is thin.

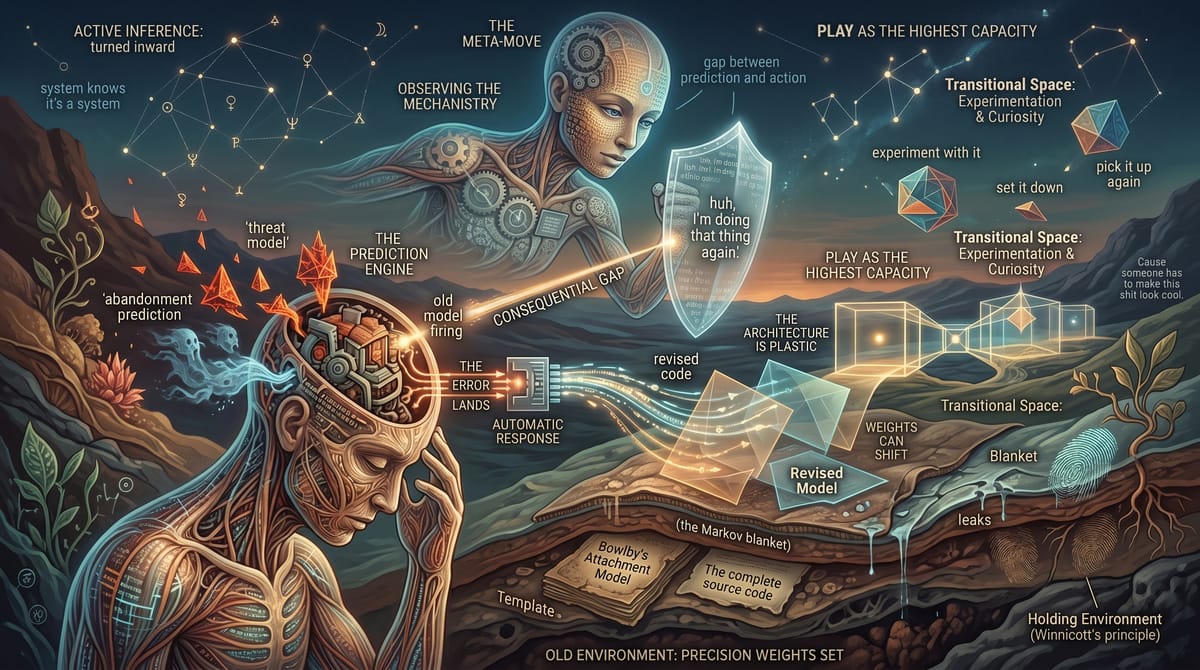

This is the meta-move. The thing that active inference makes possible when you turn it inward. You’re not just a prediction engine. You’re a prediction engine that can observe its own predictions. You’re a system that knows it’s a system.

This sounds like a small thing. It’s not. Most people spend their entire lives fully identified with their model’s output. The prediction fires; they feel the feeling; they act on it. Prediction, error, action. No gap. No observation. No moment where the system notices itself and says, huh, I’m doing that thing again. The software runs and the user never sees the code.

What this book has been, more than anything, is an invitation to read the code while it’s running. Not the complete source; that’s a lifetime of work, a stack of academic papers, a few decades of therapy if you’re honest about it. But the readable version. The version that shows you where the major loops are, where the precision weights got set, where the blanket leaks, where the holding environment left its fingerprints.

And once you’ve read it, something is different. You can’t unread it. The predictions still fire; they will always fire, because that’s what prediction engines do. But now there’s a witness. A part of you that watches the prediction arrive, watches the error land, watches the system start its automatic response, and in that watching, creates the smallest, most consequential gap in the world: the gap between the prediction and what you do next.

Winnicott would have liked this, I think. He usually doesn’t get credit for it. Most people in the attachment conversation know Bowlby’s name; he built the theory, he ran the research programs, he changed how we think about the parent-child bond. Fewer people know that Winnicott and Bowlby came up through the same British psychoanalytic world, that the two were parallel pioneers who occasionally clashed but whose ideas cross-pollinated in ways the field has never fully acknowledged. Winnicott’s work on play, holding, and good-enough environments ran alongside attachment theory, not upstream of it exactly, but in the adjacent channel that gave the theory its deepest clinical texture. Winnicott never got the brand. He got something better: the ideas that make the brand work. And the idea that matters most here is play.

Because what I’ve just described, the capacity to observe your own model, to hold it as an object of curiosity rather than being wholly captured by it, to experiment with it and set it down and pick it up again: that’s play.

Not play as recreation. Play as the highest capacity of the self. The ability to hold your own experience in transitional space: it’s yours, it’s real, it shapes your world, and also you can examine it, turn it over, and ask “what if the model is wrong?” without the asking feeling like a threat to your existence.

A prediction engine that can’t observe itself is a machine. A prediction engine that can observe itself is something else. Something that can choose, within the constraints of its architecture and its history and its holding environments, what to do with what it sees.

That’s what I wanted to give you with this book. Not a fix. Not a cure. Not the illusion that understanding the mechanism gives you mastery over it. The mechanism is bigger than your understanding of it, and it will keep running its loops whether you observe them or not.

But the observation matters. The gap matters. The moment where you notice the prediction, notice the error, notice the old model firing, and choose, not from compulsion but from something closer to freedom: that moment is what all of this is for.

Your prediction engine was trained in an environment you didn’t choose, by a nervous system that was doing its best with the building materials it had on hand. The model it built was the best available response to those specific conditions. That model carried you here. It kept you alive. It got you through environments that required exactly the strategy it provided.

And now you’re in a different environment. With a partner, or seeking one, or recovering from one. With a nervous system that’s still running the old code but is now, maybe for the first time, watching it run. With a framework that says: the code can be revised. Not easily. Not all at once. But the architecture is plastic. The weights can shift. The blanket can be mapped, and the leaks can be found, and the holding environment can be built or rebuilt or found in a place you didn’t expect.

The prediction engine is you. And you are reading your own source code.

’Cause someone has to make this shit look cool.